I am seeing more and more customers and partners who actively promote the use of Azure API Management for D365 F&O backend and/or D365 Commerce Scale Unit ODATA interfacing. See, for example, this blog post by Adrià Ariste Santacreu. However, there is still a significant number of customers and partners who shrug their shoulders and think: “Why would we use it when we can interface ‘directly’?” In this blog post I’ll address this question by sharing my personal best practices in using Azure API Management for D365 F&O backend and/or D365 Commerce Scale Unit ODATA interfacing. But before sharing the best practices, we first have to debunk the myth that interfacing via Azure API Management (APIM) would not be DIRECT 😉.

1 – The role of Azure API Management in D365 F&O interfacing

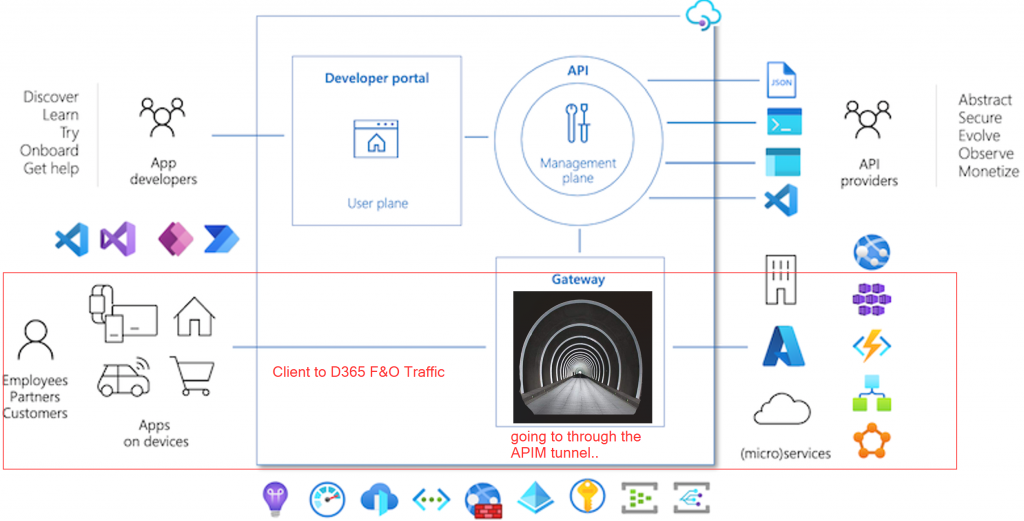

Imagine a car which drives from A to B every day. To keep it on the road, it needs to be fueled, maintained and cleaned. While this is being done, the car is grounded and cannot move from A to B. How cool would it be if the car could drive through an advanced car wash where the fueling, maintenance and cleaning are done while it keeps its pace and direction on its way to B? This is actually what Azure API Management (APIM) is about: consider APIM as a gateway (a tunnel, see Figure 1) which interface requests are routed through on their way to a backend system.

Source: Microsoft Docs (edited)

While passing through the APIM gateway, ‘Value Added Services’ (VAS) are applied to the ‘vehicle’ (= the request). These VAS hardly slow down the vehicle, often only by about 25-100 ms. They are the policies which are applied to the incoming request, to the request to the backend (the second part of the tunnel) and/or to the corresponding responses which are routed back through the same tunnel. So is the call from the client to D365 F&O still DIRECT? I’d say YES: it’s only running through a tunnel of VAS 😉.

2 – Why use APIM with D365 F&O?

For many reasons. Let’s start with a few basic principles of interfacing, first of all Authentication. Clients which interact with D365 F&O can be very different in nature. Often it’s a non-Microsoft system backed by a partner who is not used to Microsoft platform standards. Take for example an E-commerce system and partner. It sounds strange to many, but I’ve met a significant number of partners who are only used to interfacing with API Keys and Certificates and had never implemented OAuth 2.0 authentication before. D365 F&O would force them into OAuth 2.0 authentication, which may take them longer than usual to adopt. APIM can take care of the authentication to D365 F&O, independently of the Client to APIM authentication.

Another example is Error handling. Imagine 20 different systems interacting with D365 F&O, each with its own standard for error handling. We want STANDARDISATION and CENTRALISATION of error handling. We don’t want system A sending us e-mails when interaction with D365 F&O runs into an issue, system B showing an error on a management portal and system C not even surfacing an error (so we have to find out in D365 F&O that we’re missing data). We can configure APIM to report errors to Azure App Insights, which can serve as a baseline for centralised error management.
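To make the centralised error handling idea a bit more tangible, here is a minimal, untested sketch of an on-error section. It assumes Application Insights is already connected to the API in APIM; the trace source name and the shape of the JSON error body are my own illustrations, not a standard. Keep in mind that on-error only fires when APIM itself or one of its policies fails (for example when the backend cannot be reached); regular error responses from D365 F&O simply flow back through the outbound section.

```xml
<policies>
    <inbound>
        <base />
    </inbound>
    <backend>
        <base />
    </backend>
    <outbound>
        <base />
    </outbound>
    <on-error>
        <base />
        <!-- Write the failure to the trace output; with Application Insights
             connected, the entry is also recorded there.
             "d365-gateway" is an arbitrary, hypothetical source name. -->
        <trace source="d365-gateway" severity="error">
            <message>@(context.LastError.Source + ": " + context.LastError.Message)</message>
        </trace>
        <!-- Return one uniform error body to every client (shape is an assumption) -->
        <return-response>
            <set-status code="@(context.Response.StatusCode)" reason="@(context.Response.StatusReason)" />
            <set-header name="Content-Type" exists-action="override">
                <value>application/json</value>
            </set-header>
            <set-body>@(new JObject(
                new JProperty("error", new JObject(
                    new JProperty("source", context.LastError.Source),
                    new JProperty("reason", context.LastError.Reason),
                    new JProperty("message", context.LastError.Message)
                ))).ToString())</set-body>
        </return-response>
    </on-error>
</policies>
```

Putting this on a higher scope (for example All APIs) would give every client the same error format, regardless of which system sits behind the API.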

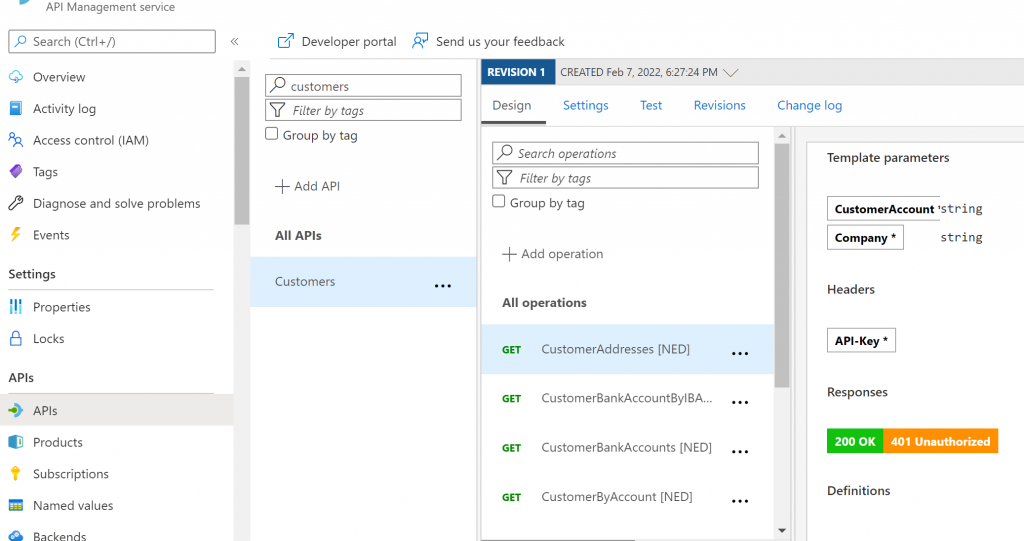

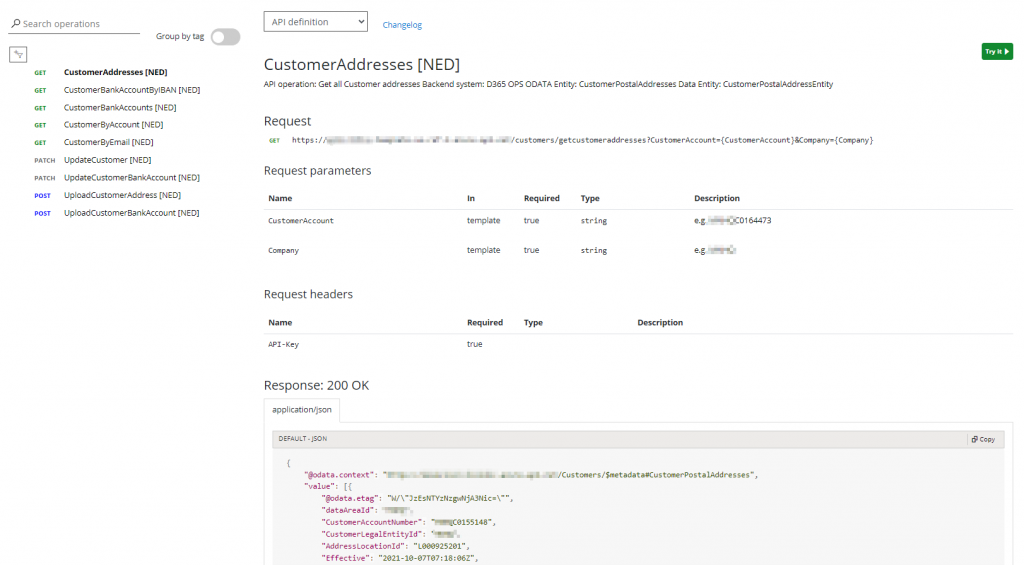

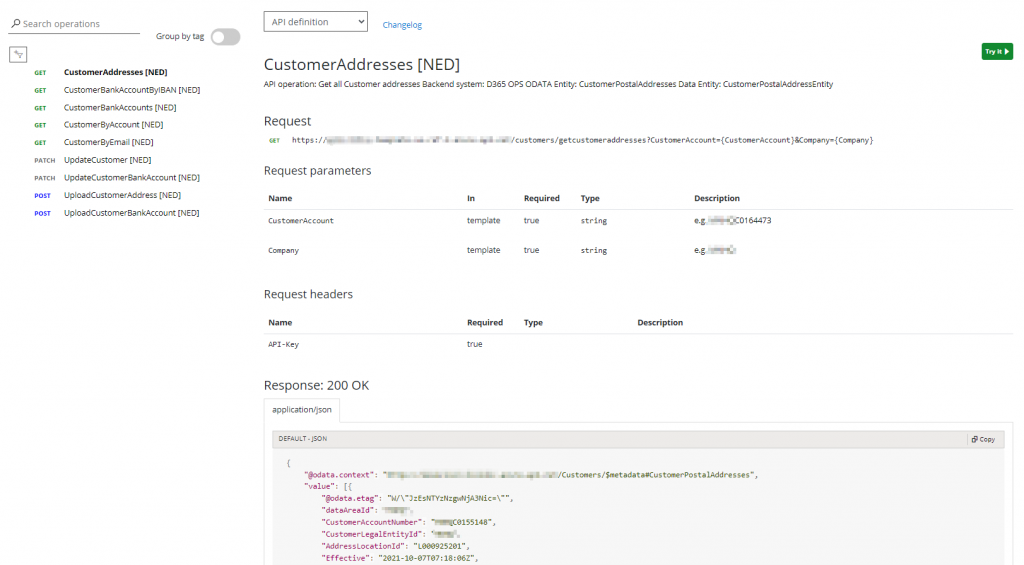

My last example is API documentation. As we all know, neither the D365 F&O backend ODATA end points nor the D365 Commerce Retail Server ODATA end points are Open API or Swagger documented. When we configure APIs with their Operations in APIM, we can include all properties of the APIs, including sample requests and all possible status code/response body pairs.

These properties are automatically published to a Developer portal with all information about our APIs. We can empower IT Partners and other stakeholders to become self-sufficient in consuming our APIs by giving them access to the portal information for some or all of the APIs we’ve technically documented in APIM.

In the next section I’ll share my personal best practices. I can already tell you this: you’ll be able to distill a lot of benefits from them for D365 F&O/D365 Commerce Scale Unit interface scenarios.

3 – My best practices in using APIM for D365 F&O and D365 Commerce Scale Unit integrations

There are many things to share with regard to APIM best practices. The categorisation below is narrowed down to my personal best practices in using APIM for D365 F&O and Commerce ODATA end point integrations specifically:

- Abstraction
  - Backend system
  - Environment
  - Template parameters
- Security
  - Template parameters
  - Strip query parameters
  - Mask backend URL
- Authentication
  - Decouple client to APIM vs APIM to backend authentication
  - Auto rotate, cache and re-use OAuth 2.0 bearer tokens
- Performance
  - Retry policy
  - Caching
- Flexibility
  - Re-pointing clients to another D365 environment in under 10 seconds
  - Using APIM end points as opposed to the D365 F&O connector in Power Automate or Power Apps
  - API Versioning
- API Documentation
  - Developer portal
  - Documentation in Power Automate – Logic Apps – Power Apps

3.1 – Abstraction

Azure API Management acts as a facade, a shell, for your system end points. If implemented well, this shell turns your technical end points into organizational Services which can easily be consumed by internal users, internal applications and partner applications. This is achieved as follows:

- External clients can become system agnostic:

- Clients no longer have to care which Application a particular function originates from. Instead, the clients can directly start to consume the functions. In other words: it allows a Service Oriented Architecture (SOA).

- In the case of D365 F&O and Commerce, APIs can be exposed as Services which can easily be consumed by any App, Power Automate Flow or Backend system. These Services can be organized as per organizational standards similar to defining a custom namespace in a .NET library:


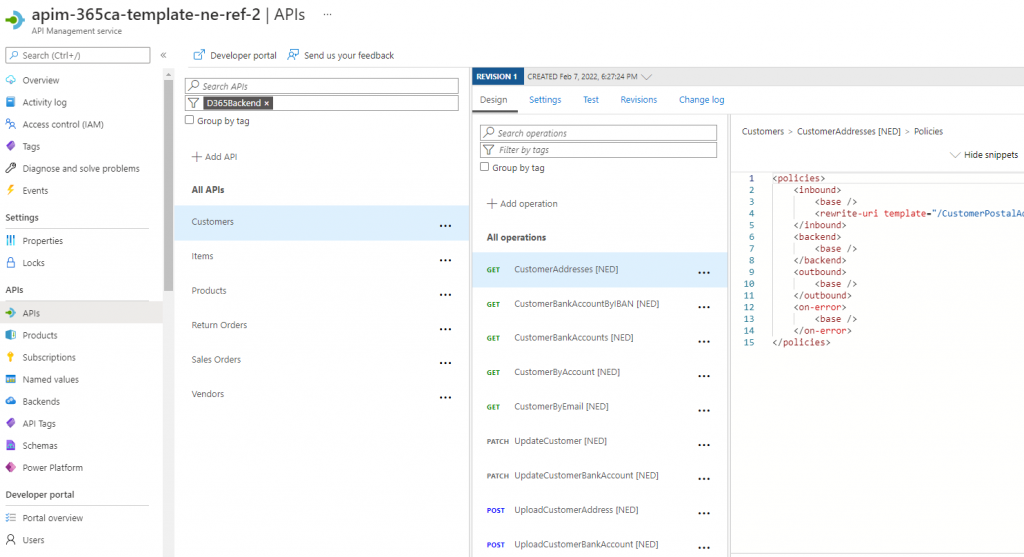

- When organising your end points as Services, the backend system becomes irrelevant. You could replace the end point by an updated end point or an end point from another system and your client wouldn’t even notice. So from the client’s perspective a D365 specific GET {D365 environment}/data/CustomerPostalAddresses call is replaced by a generic GET {customername}.azure-api.net/customers/getcustomeraddresses. For D365 F&O, this can be achieved by implementing the rewrite policy below on the particular API Operation in APIM:

Policy: set backend URL suffix

API > Specific Operation > Inbound -> rewrite-uri

<inbound>
    <base />
    <rewrite-uri template="/CustomerPostalAddresses?$filter=CustomerAccountNumber eq '{CustomerAccount}' and dataAreaId eq '{Company}'&amp;cross-company=true" copy-unmatched-params="false" />
</inbound>

- External clients can become environment agnostic: clients no longer have to care which environment a particular function comes from. Instead, the clients can directly start to consume the functions. Client requests can be redirected to another environment by an APIM config change in seconds.

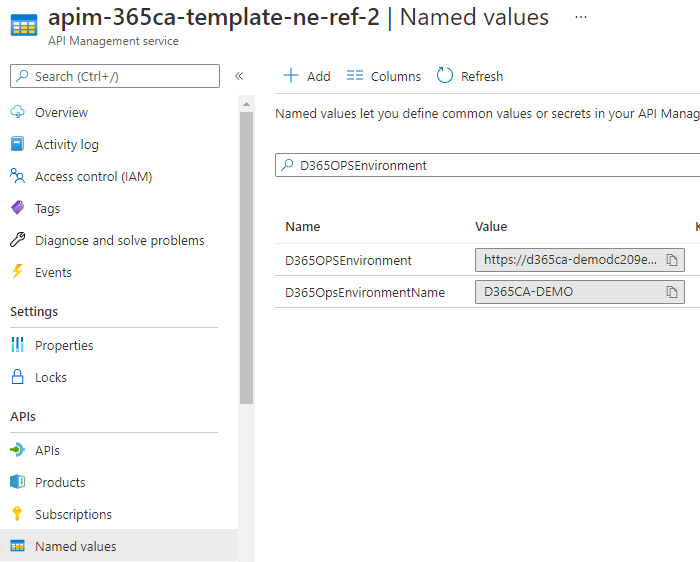

- This can be achieved by configuring a Named Value in APIM in conjunction with a Policy – In this case I put the policy on the API’s All Operations so it applies for all Operations for a particular API (here: D365 F&O entity Customers):

Named Value: D365 F&O backend URL or D365 Retail Server URL

APIM > APIs > Named Values

Policy: set backend base URL

API > All operations > Inbound -> set-backend-service base-url

<policies>
    <inbound>
        <base />
        <set-backend-service base-url="{{D365OPSEnvironment}}/data" />
    </inbound>
</policies>
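The same pattern should carry over to the Commerce side. As a sketch, assuming a hypothetical Named Value D365RetailServerUrl which holds only the host of the Retail Server / Cloud Scale Unit (so without the /Commerce suffix), the policy on a Commerce API could look like this:

```xml
<policies>
    <inbound>
        <base />
        <!-- D365RetailServerUrl is a hypothetical Named Value, e.g. the
             Retail Server host without a trailing path. The Retail Server
             ODATA end points live under /Commerce. -->
        <set-backend-service base-url="{{D365RetailServerUrl}}/Commerce" />
    </inbound>
</policies>
```

If your Named Value already includes /Commerce, drop the suffix from the policy so it is not appended twice.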

- Requests and request parameters can be simplified. For example, when searching for a customer address in D365 F&O, an ODATA call can become very complex when searching by multiple columns. In APIM, the most common searches can be standardised by defining multiple API operations which offer different sets of criteria. For example:

- API Operation 1: getaddresses -> Allows searching by street, city and zip code

- API Operation 2: getcustomeraddresses -> Allows searching by customer account

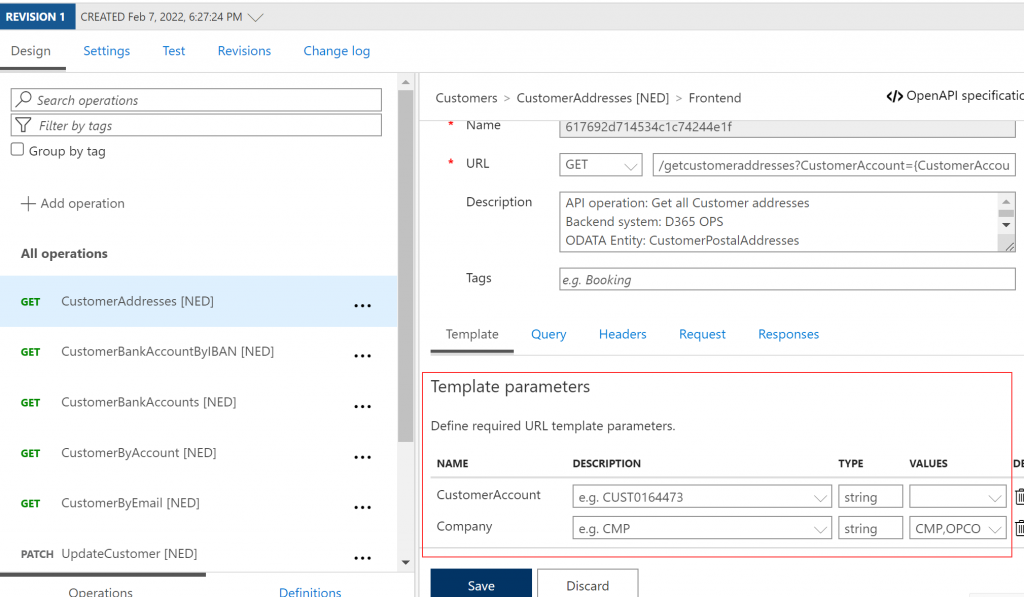

- Each specific Operation offers specific search options (APIM: Template parameters).

- The formatting of these search options can be simplified for the user, for example:

- D365 F&O request:

/CustomerPostalAddresses?$filter=CustomerAccountNumber%20eq%20'CUST0155148'%20and%20AddressLocationRoles%20eq%20'*Delivery*'&cross-company=true

- Same request to D365 F&O via APIM:

/customers/getcustomeraddresses?customeraccount=CUST0155148&company=CMP

- This can be achieved by defining the respective Template Parameters on the API Operation in conjunction with the rewrite-uri policy presented earlier in this section.

- Having these template parameters defined is also handy when using the Test feature in APIM
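As an illustration of API Operation 1, the rewrite for getaddresses could be sketched as below. The template parameter names (street, city, zipcode, company) are hypothetical, and the entity field names AddressStreet, AddressCity and AddressZipCode are assumptions which should be checked against the metadata of the CustomerPostalAddresses entity in your environment.

```xml
<inbound>
    <base />
    <!-- {street}, {city}, {zipcode} and {company} are template parameters
         defined on the getaddresses operation (names assumed).
         &amp; is the XML-escaped form of & inside an attribute value. -->
    <rewrite-uri template="/CustomerPostalAddresses?$filter=AddressStreet eq '{street}' and AddressCity eq '{city}' and AddressZipCode eq '{zipcode}' and dataAreaId eq '{company}'&amp;cross-company=true" copy-unmatched-params="false" />
</inbound>
```

With such an operation in place, a client call would look something like /customers/getaddresses?street=Main%20Street%201&city=Amsterdam&zipcode=1011AB&company=CMP (sample values made up for this example).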

3.2 – Security

- Template Parameters + Strip off any additional parameters: the use of Template Parameters as shown in section 3.1 is a strong security measure when combined with a policy to strip off any additional query parameters. For example, if a GET type of API Operation is defined to only allow searching for Customer addresses by Customer account, APIM will not forward any other criteria, even if the backend system (like D365 F&O) supports them (e.g. Customer name). As a result, only clients which know the actual Account number can fire a search request. A hacker firing calls with potential Customer names will not even get through to the backend.

Policy: Strip off Query parameters

API operation > Inbound > Rewrite-URI Policy -> copy-unmatched-params=false

<policies>
    <inbound>
        <base />
        <rewrite-uri template="/CustomerPostalAddresses?$filter=CustomerAccountNumber eq '{CustomerAccount}' and dataAreaId eq '{Company}'&amp;cross-company=true" copy-unmatched-params="false" />
    </inbound>
    <backend>
        <base />
    </backend>
    <outbound>
        <base />
    </outbound>
    <on-error>
        <base />
    </on-error>
</policies>

- Mask backend URL: when a call is fired to D365 F&O or Commerce, both return their own URL (D365 F&O or the Retail Server) in the response. For security reasons, we don’t want the backend base URL to be exposed to the client, as this might enable a malicious client to fire requests at the backend directly. With the following policy any backend base URL in the response is replaced by the APIM base URL:

Policy: mask backend URL

API > All operations > Outbound -> redirect-content-urls

<outbound>
    <base />
    <redirect-content-urls />
</outbound>

Now when D365 F&O or Retail Server returns a response, the respective backend URL is replaced by the APIM base URL:

{
    "@odata.context": "https://myAPIM.azure-api.net/Customers/$metadata#CustomerPostalAddresses",
    "value": [
        {
            "@odata.etag": "W/\"JzEsNTYzNzgwNjA3Nic=\"",
            "dataAreaId": "COMP",
            "CustomerAccountNumber": "CUST0155148",

3.3 – Authentication

APIM can take care of the authentication to the backend system. This has a couple of benefits:

- Security: sensitive security information is not distributed outside Azure:

- Decoupling Client to APIM and APIM to Backend authentication saves you from having to distribute sensitive information like a Client ID/Passphrase to a client application.

- It is even possible to set up OAuth 2.0 (Client ID/Passphrase) from the client to APIM and a separate OAuth 2.0 (Client ID/Passphrase) from APIM to D365 F&O or the Commerce Cloud Scale Unit. In this way the access to D365 resources via APIM can be authenticated differently per client. Vice versa, a client can have one identity/login for all resources (D365 and non-D365) in your tenant. In other words: a client could log in to all relevant resources in your tenant with a single APP ID/Passphrase, even though one or more backend systems would not support OAuth 2.0 (!).

- In case a client connects to APIM via API-Key, the API-Key in the request can be stripped off so it does not reach the backend system. This ensures the API-Key cannot be intercepted beyond APIM and then be re-used in a new client request:

Policy: strip API-Key header off request to backend

API > All operations > Inbound -> set-header -> “delete”

<inbound>
    <base />
    <set-header name="API-Key" exists-action="delete" />
</inbound>
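For the other option mentioned above, OAuth 2.0 from the client to APIM, a minimal sketch could use the validate-jwt policy. This assumes the client obtains its token from Azure AD; TenantId and ApimApiAudience are hypothetical Named Values, and the exact audience value depends on how the app registration for your API is set up.

```xml
<inbound>
    <base />
    <!-- Reject calls which do not carry a valid Azure AD token issued for this API.
         {{TenantId}} and {{ApimApiAudience}} are hypothetical Named Values. -->
    <validate-jwt header-name="Authorization" failed-validation-httpcode="401" failed-validation-error-message="Access token is missing or invalid.">
        <openid-config url="https://login.microsoftonline.com/{{TenantId}}/v2.0/.well-known/openid-configuration" />
        <audiences>
            <audience>{{ApimApiAudience}}</audience>
        </audiences>
    </validate-jwt>
</inbound>
```

When this is combined with a policy that sets the Authorization header for the backend with exists-action="override", the client’s own token is replaced before the request leaves APIM, so the two authentication legs stay fully separated.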

- Background caching and rotation of the OAuth 2.0 bearer token:

- When APIM is used to authenticate to the D365 backend or D365 Commerce Cloud Scale Unit independently of the client authentication, the token for accessing the backend can be cached and auto rotated for faster response times.

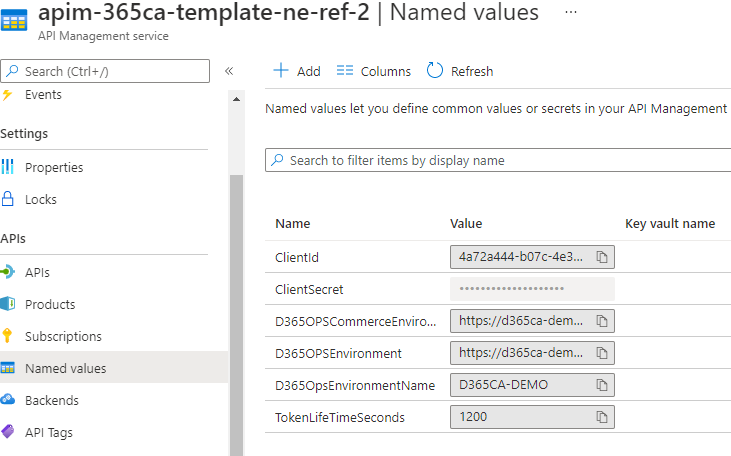

- This can be achieved by a combination of a Named Value for the token lifetime and an inbound policy

- Note: the bearer token is always specific to a resource. Hence, my best practice is to save the token into cache by Environment name. In doing so, a new token is automatically fetched when APIM is redirected to another D365 environment

- Note 2: I often save the Client ID and secret in Azure Key Vault and use that reference in the respective Named Value (see column Key vault name below)

Named Value: Client ID – Secret – Environment – Environment name – Token life time

APIM > APIs > Named Values -> Token life time setting 20 minutes = 1200 seconds

Policy: fetch OAuth 2.0 token, cache and re-use

API > All operations > Inbound -> cache-lookup-value, send-request, cache-store-value, set-header

<inbound>
    <base />
    <!-- Fetch token from cache -->
    <cache-lookup-value key="{{D365OpsEnvironmentName}}-token-{{ClientId}}" variable-name="bearerToken" />
    <choose>
        <!-- When a bearerToken is not available in cache -->
        <when condition="@(!context.Variables.ContainsKey("bearerToken"))">
            <send-request mode="new" response-variable-name="oauthResponse" timeout="20" ignore-error="false">
                <set-url>{{AuthorizationServer}}</set-url>
                <set-method>POST</set-method>
                <set-header name="Content-Type" exists-action="override">
                    <value>application/x-www-form-urlencoded</value>
                </set-header>
                <set-body>@("grant_type=client_credentials&client_id={{ClientId}}&client_secret={{ClientSecret}}&resource={{D365OPSEnvironment}}")</set-body>
            </send-request>
            <!-- Store result in cache -->
            <set-variable name="accessToken" value="@((string)((IResponse)context.Variables["oauthResponse"]).Body.As<JObject>()["access_token"])" />
            <cache-store-value key="{{D365OpsEnvironmentName}}-token-{{ClientId}}" value="@((string)context.Variables["accessToken"])" duration="{{TokenLifeTimeSeconds}}" />
            <set-header name="Authorization" exists-action="override">
                <value>@("bearer " + (string)context.Variables["accessToken"])</value>
            </set-header>
        </when>
        <!-- If cache value exists -->
        <otherwise>
            <set-header name="Authorization" exists-action="override">
                <value>@("bearer " + (string)context.Variables["bearerToken"])</value>
            </set-header>
        </otherwise>
    </choose>
</inbound>

3.4 – Performance

- Retry policy:

- I actually prefer not to let APIM handle retries to backend systems:

- Requests which can take a long time (say > 10 seconds) may take even longer when initial attempts fail. This can push the client into a timeout.

- Most 3rd parties have their own retry policies incorporated into their API framework. This lets the client stay in full control of every call.

- If your client application framework does not support retry policies or you’d like to utilise the advanced options APIM offers for retries, you can enable a retry policy in APIM as in the example below:

- Note: after V10.0.13 D365 F&O has throttling enabled for ODATA requests

- As part of this framework, D365 F&O will return status code 429 “Too Many Requests” when it receives too many requests. So we can put a retry condition on that status code and thereby vary the retry settings per status code (!):


Policy: retry requests to backend systems

API > All operations > Backend -> retry

Note: I would recommend putting the count and interval values in Named Values, so an update to the Named Value applies to all API operations at once

<backend>
    <!-- Re-send the request to the backend while it answers with 429 -->
    <retry condition="@(context.Response.StatusCode == 429)" count="3" interval="10" first-fast-retry="false">
        <forward-request buffer-request-body="true" />
    </retry>
</backend>

- Caching: responses can be cached

- If a client application is firing the same request within a certain timeframe, APIM can return a cached response as opposed to forwarding the call to the backend system again

- I’ve used this pattern in an E-commerce to D365 Commerce integration scenario: customers tend to click back and forth between pages which can lead to re-loading of the same data multiple times. Returning cached responses was very useful here to reduce load times and number of calls to the D365 Cloud Scale Unit.

- A cached response takes about 45-50ms to be returned to the client, so it leads to improved performance in repetitive scenarios

- Here’s how to implement it:

Named Value: time window to cache a response for

APIM > APIs > Named Values -> Caching duration

Policy: cache a response and return it from cache

API > All operations > Outbound -> cache-store

API > All operations > Inbound -> cache-lookup

<inbound>
    <base />
    <cache-lookup vary-by-developer="false" vary-by-developer-groups="false" />
</inbound>
<outbound>
    <base />
    <cache-store duration="{{CachingDurationInSeconds}}" />
</outbound>
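If you want more control over what counts as “the same request”, the lookup can be told which query parameters to vary the cache entry by. The sketch below assumes the customeraccount and company query parameters from the getcustomeraddresses example in section 3.1; treat it as a starting point rather than a tested configuration.

```xml
<inbound>
    <base />
    <!-- Keep one cached response per customer account and company.
         Placing the lookup ahead of any token policy means a cache hit
         is returned without fetching a backend token first. -->
    <cache-lookup vary-by-developer="false" vary-by-developer-groups="false" downstream-caching-type="none">
        <vary-by-query-parameter>customeraccount</vary-by-query-parameter>
        <vary-by-query-parameter>company</vary-by-query-parameter>
    </cache-lookup>
</inbound>
<outbound>
    <base />
    <cache-store duration="{{CachingDurationInSeconds}}" />
</outbound>
```

A short duration (seconds rather than minutes) is probably the safer choice for data which changes often in D365, since a cached response is by definition a little stale.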

3.5 – Flexibility

- Re-pointing to another environment: since APIM allows client applications to consume Services in a backend system agnostic way, client apps can be linked up with a different backend system without downtime.

- When implemented as described in section 3.1 (Abstraction), re-pointing is just a matter of changing the values of the Named Values D365OPSEnvironment and D365OPSEnvironmentName. Since APIM won’t find a cached bearer token for that Environment name, it will retrieve a new one automatically.

- With this, it’s easy to re-point a TEST E-commerce website from a D365 F&O DEV box to a sandbox for example

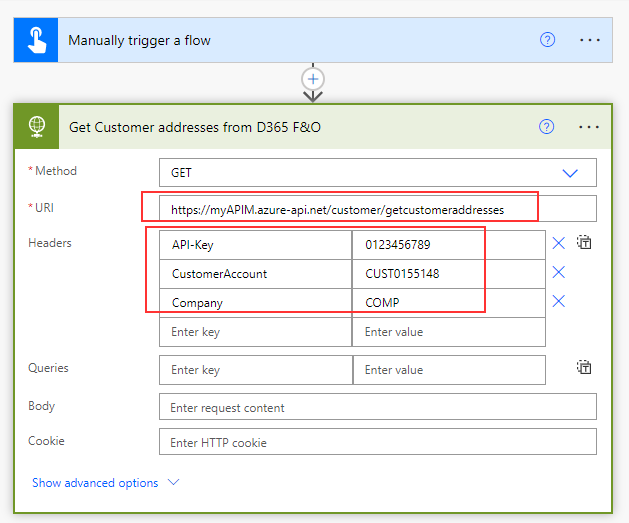

- Using APIM end points in Power Automate, Logic App and Power Apps as opposed to the D365 F&O connector:

- The D365 F&O connector for Power Automate/Logic Apps simplifies integration scenarios for non-techies

- However, using the connector is not that simple, due to the requirement to come up with the right entity key pairs in update/insert/delete scenarios

- In addition, using the connector requires a User based data connection where APP based authentication might be preferred

- Using an APIM end point as opposed to the D365 F&O connector saves a data connection in the Flow or Logic App orchestration – This makes it more ‘lightweight’ and easier to export/migrate, for example to another tenant

- See below how to create an HTTP request to a D365 F&O ODATA end point to fetch all the address information for a customer via APIM, as per the setup described in this blog post. For authentication to APIM, an API Key is defined. APIM authenticates to D365 F&O by bearer token as described in section 3.3 (Authentication):

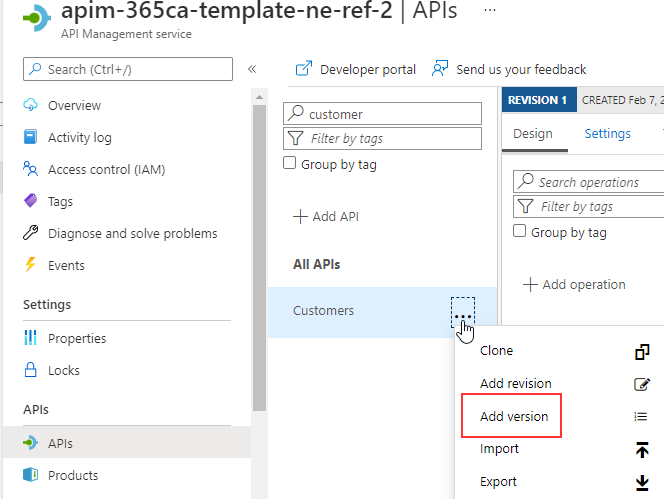

- Version your APIs: when in Production, it’s important to be able to quickly switch from one API version to another for patching purposes while keeping the risk low. To achieve this you can enable versioning on any API:

- Enable versioning by choosing the option Add version on any of your APIs (see picture below)

- Now, your client application can easily jump over to a new/patched version of your API by a simple URL change: e.g. switching from {APIM URL}/customers/getcustomeraddresses to {APIM URL}/customers/V2/getcustomeraddresses

3.6 – API Documentation

- Developer portal: Both D365 F&O ODATA and D365 Commerce Cloud Scale Unit ODATA interface frameworks lack Open API/Swagger documentation:

- To fill this gap, Microsoft ships proxy clients for D365 Commerce ODATA and the ODATA V4 Client Code Generator tool for Visual Studio, which can be used to generate a Client proxy for the D365 F&O backend ODATA service

- However, these proxies only allow Programmatic Integration and are more generic tools which may lack some detail

- When a D365 F&O or D365 Commerce API is described in APIM in terms of template parameters, header parameters, request body, potential status codes etc., this information is automatically published to the APIM Developer portal

- The Developer portal can be fully customised and customer branded, similar to SharePoint sites

- Internal and external users can be authenticated (either via e-mail/password or AAD user login) to access all or a subset of APIs so they become self-sufficient in consuming them:

Azure API Management Developer portal: available APIs after login

APIM > Portal overview > Developer portal

Azure API Management Developer portal: API details

APIM > Portal overview > Developer portal

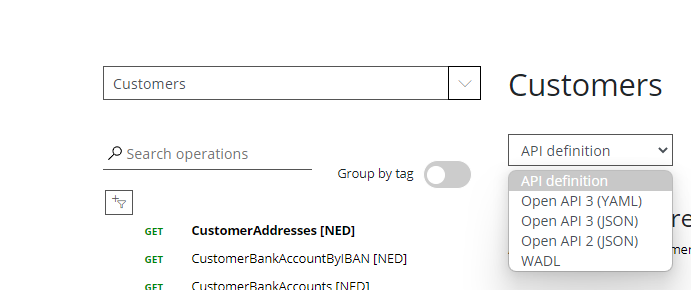

Azure API Management Developer portal: Export to WADL, Open API v2 or v3

APIM > Portal overview > Developer portal

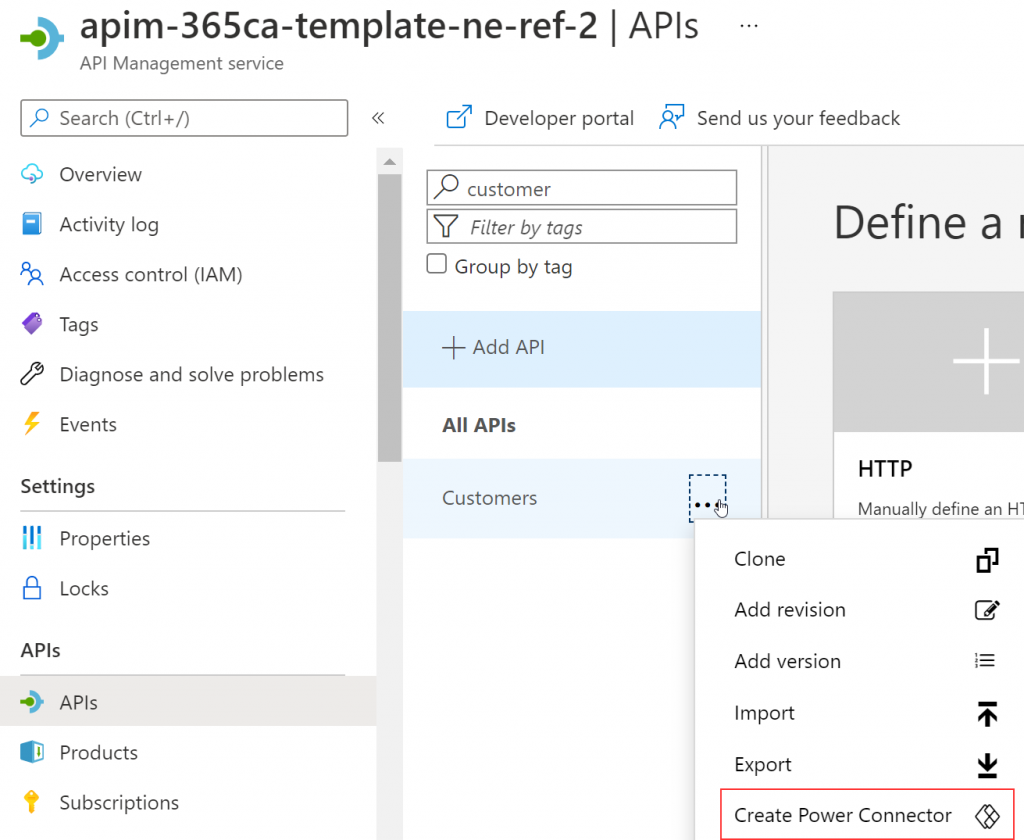

- Documentation in Power Automate – Logic Apps – Power Apps: APIM APIs can be exported as a Power Connector so the API documentation is also available in Power Automate, Logic Apps and Power Apps:

APIM: export as Power connector

API > Create Power Connector

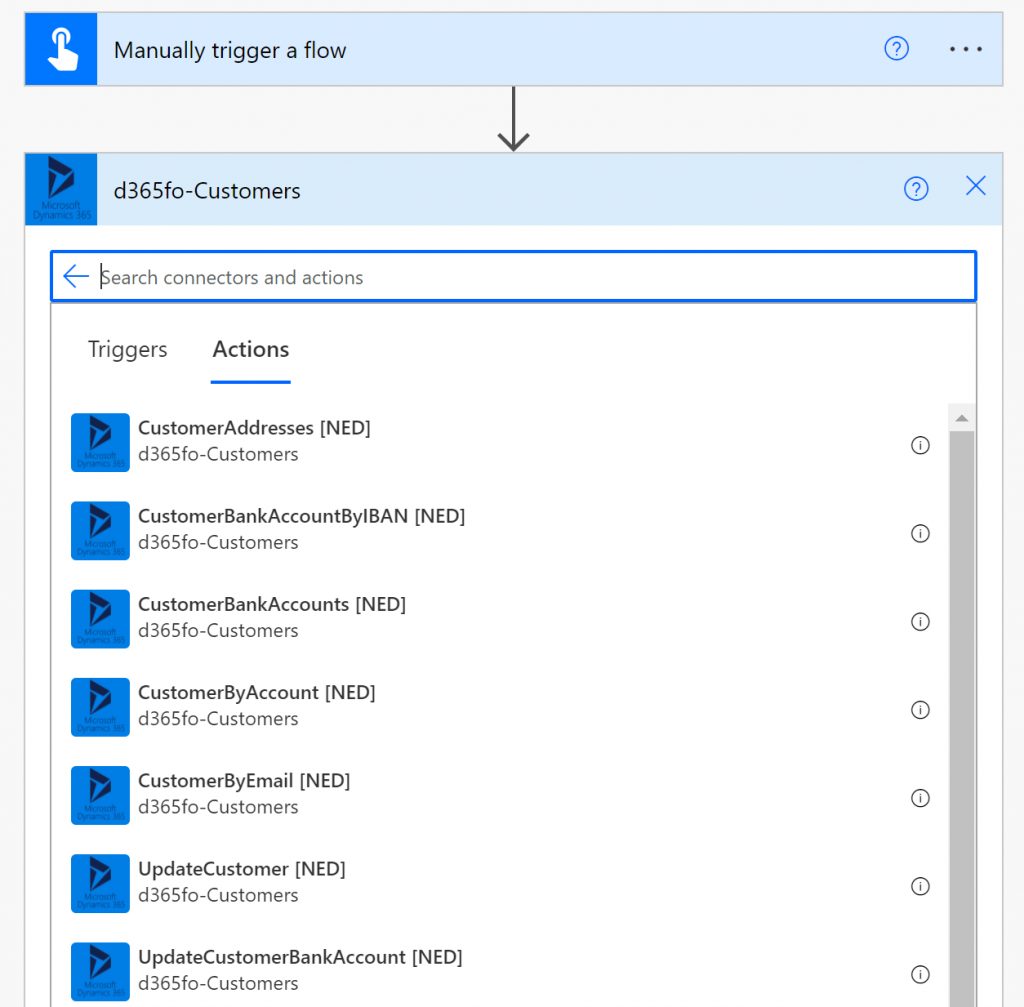

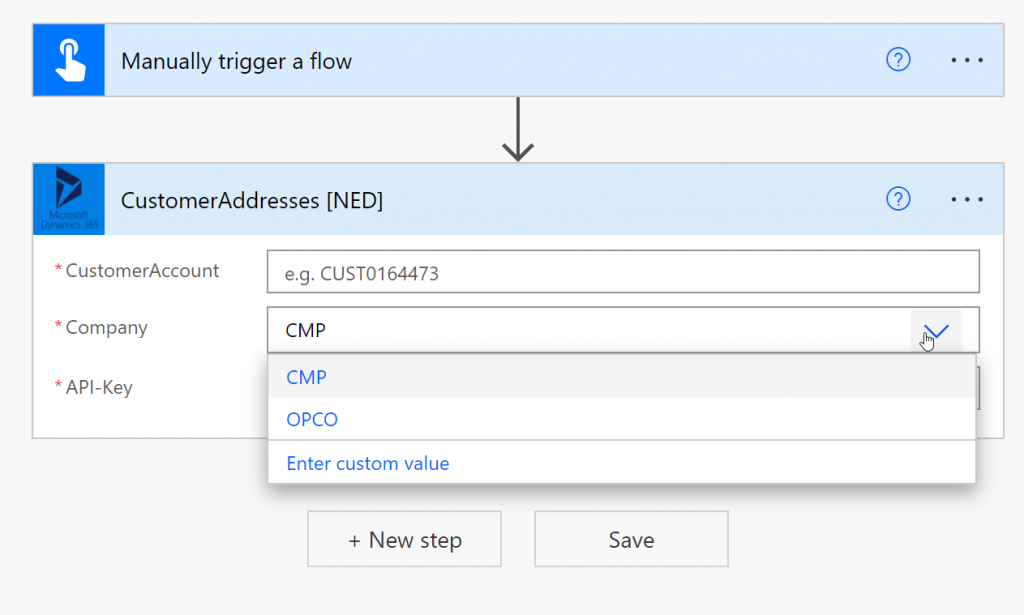

Power Automate – Logic Apps – Power Apps

List of APIs pulled from APIM definitions

Power Automate – Logic Apps – Power Apps

Custom APIM based connector with APIM documentation

Note: the connector only requires API-Key authentication to APIM as per the respective definition in APIM

4 – Final words

I hope this blog post inspired you to explore the use of Azure API Management for D365 F&O and D365 Commerce interfacing and more. I think APIM can be useful in many scenarios. Fortunately, the costs are relatively low. A customer can already deploy a Production environment for just over 150 dollars per month.

Warm regards,

Patrick Mouwen

03.09.2022